Modelling & Simulation

A vision of the state of play

By ITEA Vice-chairman Philippe Letellier. Co-signed by: Thomas Bär (Daimler), Daniel Bouskela (EDF), Patrick Chombart (Dassault Systèmes), Magnus Eek (Saab), Martin Benedikt, Martin Krammer (Virtual Vehicle/Modelica Association), Martin Otter (DLR/Modelica Association) and Klaus Wolf (Fraunhofer SCAI)

To be or not to be … that is not the question

In today’s market, developments in simulation are being driven by a number of trends. These can be summarised as:

- Optimisation of product and production line design

- Production line command & control for efficiency, quality and security

- Better understanding of how end products are actually used

- Dynamic personalisation of the end product

At the heart of these trends is digitalisation. This encompasses two main dimensions: the acquisition of real data through IoT from the product in operational conditions (the real world), and the modelling & simulation of both the product, to forecast its usage and efficiency, and the production lines, in relation to the product itself. It is at the intersection of these two dimensions that we are building the connected digital twin of our physical world, enabling better-informed design as well as efficient command & control to ensure productivity and quality. Importantly, it also means that the environment for human-machine collaboration (the use of cobots) can be made safer. The upshot is a flow of information that helps you to understand how the product is actually used, along with the associated new trends in usage. This is at the heart of the agile approach that, in turn, enables the product to be dynamically adapted to actual demand and use.

So the question of whether or not we have to establish these kinds of digital twins is no longer really relevant. The real questions are how to design such complex digital twins and how to increase the precision of a digital twin that, after all, remains just an image of reality. ITEA understands that simulation is becoming ever more relevant and important, and has invested a lot in it, achieving great successes and a significant impact on standards and business.

Coupling the small and the large

When it comes to software engineering, the normal first step is modelling. Then, when you are confronted with complexity, you move towards modularity. Already today, no one is able to gather all the knowledge needed to model a product in all its dimensions. As an example, in the automotive industry the 3D model of the car has been around for many years now. But if you are a premium brand, you will want to master, for example, the sound the car door makes when it is slammed shut. It helps differentiate a Mercedes or BMW, for instance, from a SEAT or a Dacia. The customer expects a certain quality of ‘clunk’. However, to reach a good level of realism, a simple model is not enough. It requires in-depth knowledge of audio. An innovative SME has invested a lot of know-how to build this kind of simulator and, while it remains a very small niche market, it is rather rewarding. For the major 3D model players, it is not really reasonable to invest in such in-depth knowledge given the size of the market. Yet where the customer wants both the 3D model and the car-door slamming model, the two are duty-bound to collaborate in an interoperable way, with a multi-physics approach.

Rising to the challenges

The first ITEA project that worked on this kind of challenge was MODELISAR. It aimed to ensure that a complex model can be designed on the basis of car sub-systems, independent of the modelling tools and languages. To this end, the project designed the concept of the Functional Mock-up Unit (FMU) and its associated Functional Mock-up Interface (FMI) to allow the exchange of behavioural models between FMUs and ensure interoperability. FMI has since become a worldwide standard of the Modelica Association used by all the main industries. For instance, over 140 tools support FMI, from large software vendors, SMEs and academia. There is continuous momentum to support and enhance the standard in a permanent FMI project that includes several Working Groups in liaison with the numerous successor projects: OpenCPS, VMAP, ACOSAR and MOSIM. Rather than stopping at a solution for the original application domain, which would already have been a very good success, MODELISAR’s FMI standard was exploited in further industry landscapes. For instance, it has ventured beyond the initial automotive domain to satisfy similar needs for interoperability in the aerospace and energy domains.
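The idea of coupling independently built sub-system models through a common stepping interface can be illustrated with a minimal sketch. It is not the FMI API: the `Tank` and `Controller` classes, the `do_step` method and the `run_master` function are hypothetical names chosen for illustration, and the physics is a toy example. The point is only that the master exchanges inputs and outputs at fixed communication points and never looks inside a unit.

```python
from dataclasses import dataclass


@dataclass
class Tank:
    """Hypothetical sub-system model: a tank that fills and drains."""
    level: float = 0.0       # output
    inflow: float = 0.0      # input, set by the master
    drain_rate: float = 0.5

    def do_step(self, dt: float) -> None:
        # Explicit Euler step of d(level)/dt = inflow - drain_rate * level
        self.level += dt * (self.inflow - self.drain_rate * self.level)


@dataclass
class Controller:
    """Hypothetical sub-system model: a proportional level controller."""
    setpoint: float = 1.0
    gain: float = 2.0
    measured_level: float = 0.0  # input, set by the master
    command: float = 0.0         # output

    def do_step(self, dt: float) -> None:
        self.command = self.gain * (self.setpoint - self.measured_level)


def run_master(tank: Tank, controller: Controller, dt: float, steps: int) -> list[float]:
    """Fixed-step master: exchange values, then advance every unit by dt."""
    levels = []
    for _ in range(steps):
        # Communication point: copy outputs to the connected inputs.
        controller.measured_level = tank.level
        tank.inflow = controller.command
        # Each unit advances on its own; the master never looks inside.
        controller.do_step(dt)
        tank.do_step(dt)
        levels.append(tank.level)
    return levels


if __name__ == "__main__":
    levels = run_master(Tank(), Controller(), dt=0.1, steps=200)
    print(f"final tank level: {levels[-1]:.3f}")
```

Because each unit exposes only its inputs, outputs and a step method, either one could in principle be replaced by a model exported from a different tool without changing the master loop.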

Compatibility and interoperability

After the FMI step, which allowed modularity in the design of complex models, the next challenge was to ensure compatibility and interoperability between the existing design tools that remain useful on a daily basis. This was taken up by the OpenCPS project. A recap on the state of play: the Modelica Association traditionally ‘housed’ the family of modelling experts responsible for developing many of the solutions and tools, with FMI as the agreed standard for exchanging models between simulators. The SSP (System Structure and Parameterization) Working Group, initiated by ZF on the basis of a workshop of ZF, Bosch and BMW, designed a new standard under the auspices of the Modelica Association to integrate and interoperate different FMUs and their parameters using the FMI standard. UML (Unified Modelling Language) and SysML (Systems Modelling Language) are key standards for designing software solutions and system architectures. OpenCPS focused on the interoperability between these different standards, developing OMSimulator (an open-source, industry-grade FMI and SSP master simulation tool) that enables FMUs to be imported, connected and simulated in an efficient and standardised way. OMSimulator is the first tool to integrate FMI, SSP and the Transmission Line Method (TLM), a well-established technique for numerically robust distributed simulation. In addition, successful SSP-based roundtrip engineering between the simulation and architecture domains has been demonstrated in the project.

Virtual material modelling …

While the efforts of OpenCPS focused on system simulation, there is another physical level for simulation if you want to take into account the property of materials themselves. This was the focus of the VMAP project.

VMAP worked on a new Interface Standard for Integrated Virtual Material Modelling in the manufacturing industry where the state-of-the-art in the exchange of local material information in a Computer-Aided Engineering (CAE) software workflow is not standardised and raises a lot of manual and case-by-case implementation efforts and costs. To be able to facilitate the holistic design of manufacturing processes and product functionality, knowledge of the details and behaviour of the local material is required. The VMAP project therefore targeted the acquisition of a common understanding and interoperable definitions for virtual material models in CAE and the establishment of an open and vendor-neutral ‘Material Data Exchange Interface Standard’ community to carry the standardisation efforts forward into the future.

… and integration

But the project had a challenge to overcome: how to interface a system simulation as described by FMU, FMI and SSP with a model of material behaviour as described in the VMAP format. VMAP’s answer was to enable the interaction of detailed 3D Finite Element Analysis (FEA) codes to model parts of a complete production line. An example in the composite domain could be (step 1) draping simulation → (step 2) curing analysis → (step 3) moulding simulation → (step 4) noise, vibration, and harshness (NVH) product analysis. Each of these FEA models will run for hours even on larger high-performance computing systems. In order to integrate these two levels of modelling, a three-phase approach emerged. First, run detailed coupled FEA workflows and validate each single step as well as the coupled analysis. Second, reduce the ‘heavy’ FEA models (by numerical methods or AI & data-driven approaches) to systems models, in the Modelica language for example. Third, integrate these systems models into an FMI-based systems workflow, where they can finally even be used to realise online control of production.
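The reduction of a ‘heavy’ model to a fast systems model can be sketched in a few lines. This is only an illustration under stated assumptions: `detailed_model` is a made-up curve standing in for an FEA run that would take hours, and the reduced model is a simple lookup table with linear interpolation, one of many possible data-driven reductions and not the method used in VMAP. All names are hypothetical.

```python
import bisect
import math


def detailed_model(temperature_c: float) -> float:
    """Stand-in for an hours-long FEA run (made-up curve, not real material data)."""
    return 10.0 + 5.0 * math.tanh((temperature_c - 120.0) / 30.0)


class TableSurrogate:
    """Reduced model: piecewise-linear interpolation over sampled results."""

    def __init__(self, xs: list[float], ys: list[float]) -> None:
        self.xs = xs  # sample inputs, strictly increasing
        self.ys = ys  # detailed-model outputs at those inputs

    def __call__(self, x: float) -> float:
        # Outside the sampled range, hold the nearest sampled value.
        if x <= self.xs[0]:
            return self.ys[0]
        if x >= self.xs[-1]:
            return self.ys[-1]
        i = bisect.bisect_right(self.xs, x)
        x0, x1 = self.xs[i - 1], self.xs[i]
        y0, y1 = self.ys[i - 1], self.ys[i]
        return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def build_surrogate(model, lo: float, hi: float, n: int) -> TableSurrogate:
    """Reduction: sample the detailed model n times and tabulate the results."""
    xs = [lo + (hi - lo) * k / (n - 1) for k in range(n)]
    return TableSurrogate(xs, [model(x) for x in xs])


def max_abs_error(model, surrogate, lo: float, hi: float, n_checks: int) -> float:
    """Validation: largest deviation from the detailed model on a check grid."""
    points = [lo + (hi - lo) * k / (n_checks - 1) for k in range(n_checks)]
    return max(abs(model(p) - surrogate(p)) for p in points)


if __name__ == "__main__":
    surrogate = build_surrogate(detailed_model, lo=60.0, hi=180.0, n=25)
    error = max_abs_error(detailed_model, surrogate, 60.0, 180.0, n_checks=1001)
    print(f"largest surrogate error on the check grid: {error:.4f}")
```

The design choice here is the trade-off named in the text: the detailed model is called only 25 times up front, after which the surrogate answers instantly, at the cost of an approximation error that has to be validated before the reduced model is trusted in a systems workflow.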

Industrial process

But this does not mean ‘job done’ yet: once you are able to set up such a sophisticated model, you are faced with the challenge of integrating it in an industrial process. This is exactly the problem that the ENTOC project wrestled with, working on how to gather the models of the different components of a production line to support industrial engineering. The result was a standardisation of component behaviour for production line engineering, coupled with a safe marketplace approach managed by trusted third parties, that ultimately allows FMUs to be integrated in virtual engineering.

From model to application

Once you have been able to establish such sophisticated models, many applications present themselves. One such key application is to enhance the test phase, which is always long, costly and agonising. AVANTI reused FMI to test the production line through virtual commissioning while ACOSAR developed standardised integration of the distributed testing environment, including hardware in the loop.

ACOSAR specified and standardised a communication protocol to integrate different distributed components, known as the Distributed Co-simulation Protocol (DCP). ACOSAR also included a model-based methodology to integrate modules, compatible with FMI. A key consideration in developing the DCP was that development starts from simulation, a purely virtual prototype, and that the first pieces of hardware become available along the way. Single simulation models are then replaced with real components, and the same or similar experiments are run again. Following this approach, more and more models are successively replaced, and the virtual prototype becomes more real until the actual product emerges. The DCP supports this approach because it can be used:

- by simulation tools, for pure numerical calculations

- by hardware-in-the-loop setups, integrating models with target hardware

- by real-time systems, for full real-time co-simulation experiments on large test rigs, for example.

The DCP was designed for real-time operation, with a very low or no communication overhead, and implementations can be done with a low memory footprint. Therefore it is highly suitable for real-time communication. However, the actual real-time performance depends on the underlying transport protocol running on a communication system.
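The gradual replacement of simulation models by real components, as described above, relies on the experiment seeing the same interface in both cases. The following sketch illustrates that principle only. It does not use or reproduce the DCP: the `Motor` interface, the `HardwareMotor` wrapper with its injected I/O callables and the `FakeBench` stand-in are all hypothetical, and the fake bench exists purely so that the example runs without any device attached.

```python
from typing import Callable, Protocol


class Motor(Protocol):
    """Common interface the test rig relies on (hypothetical, not the DCP API)."""

    def step(self, dt: float, command: float) -> float: ...


class SimulatedMotor:
    """Purely numerical model: speed follows the command with a first-order lag."""

    def __init__(self, time_constant: float = 0.5) -> None:
        self.time_constant = time_constant
        self.speed = 0.0

    def step(self, dt: float, command: float) -> float:
        self.speed += dt * (command - self.speed) / self.time_constant
        return self.speed


class HardwareMotor:
    """Wraps real hardware behind the same interface via injected I/O callables."""

    def __init__(self, write_command: Callable[[float], None],
                 read_speed: Callable[[], float]) -> None:
        self.write_command = write_command
        self.read_speed = read_speed

    def step(self, dt: float, command: float) -> float:
        # dt is unused: real hardware advances in wall-clock time.
        self.write_command(command)
        return self.read_speed()


def run_experiment(motor: Motor, command: float, dt: float, steps: int) -> float:
    """The same experiment runs unchanged on a model or on hardware."""
    speed = 0.0
    for _ in range(steps):
        speed = motor.step(dt, command)
    return speed


class FakeBench:
    """Stand-in for a test bench so the sketch runs without any device."""

    def __init__(self) -> None:
        self.last_command = 0.0

    def write(self, command: float) -> None:
        self.last_command = command

    def read(self) -> float:
        return 0.97 * self.last_command  # pretend the motor loses 3 % of speed


if __name__ == "__main__":
    bench = FakeBench()
    for motor in (SimulatedMotor(), HardwareMotor(bench.write, bench.read)):
        final = run_experiment(motor, command=100.0, dt=0.01, steps=500)
        print(f"{type(motor).__name__}: final speed {final:.2f}")
```

In a real setup the two callables would talk to the device over whatever transport the test rig provides, which is also where the actual real-time performance would be decided.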

Safe cobot environment

Another application revolves around the safety of human workers in the context of a production line that incorporates many cobots. The challenge is not to do with the robot component – we know more or less the exact movement of the robots through their simulation – but more the movement of the human workers that have to interact with their robot counterparts. How can we take account of that? This is exactly the challenge covered by the MOSIM project.

To deal with this issue, MOSIM developed what it refers to as a Motion Model Unit (MMU) and a Motion Model Interface (MMI), directly inspired by FMI. This allows the connection of heterogeneous digital human motion simulations. In MOSIM, the production line was modelled with Modelica, FMU, FMI and UML, and the human motion with MMU and MMI. The two simulators then become immediately interoperable to support integration in the production line. The modelling of rules helps protect the safety of the workers. MOSIM is currently focusing on human motions only, but the AIToC project (whose Full Project Proposal has been submitted) intends to address the next logical step of combining robots and workers (the “hybrid workplace”), whereby the incorporation of AI is envisaged.

Situational awareness

Nowadays, many systems include control software that requires better awareness of the environment and of the systems to be controlled. An ITEA project aiming to tackle this issue is EMPHYSIS. Still with another year to run, EMPHYSIS wants to bridge the gap between complex multi-physics modelling and the embedded software in vehicles. In this context, a derivative of the established FMI standard, eFMI, is being created to describe new interfaces and the interoperability specifications between the tools used for modelling/simulation, embedded code development, quality checking, testing and integration in the ECU (Electronic Control Unit). With eFMI, the standard is expanding into a new domain, with the commitment of more than 25 partners along with automotive OEMs & Tier 1 suppliers.

Project bites

- MODELISAR: FMI is the seminal standard for exchanging data between different simulators, so that complex simulators can be built from separate ones

- MOSIM: Motion Model Unit (MMU) and Motion Model Interface (MMI), directly inspired by FMI; allows connection of heterogeneous digital human motion simulations

- ENTOC: standardisation of component behaviour for production-line engineering; integrating FMUs in virtual engineering

- AVANTI: reuse of FMI to test the production line through virtual commissioning

- ACOSAR: standardised integration of distributed testing environments

- VMAP: vendor-neutral standard for CAE data storage to enhance interoperability in virtual engineering workflows

Future evolutions

The domain of simulation is very fertile and warrants some major investment in R&D. If we look at the trend in digital twinning, there are clear benefits for design automation given the substantial data that is acquired during the physical testing. Such feedback can be used to evaluate the system model performance. In addition, use can be made of investments in digital twinning at various levels for detecting anomalies in engineering, long-life maintenance and product adaptation to different kinds of use.

Questions will arise, for example concerning quality assurance on the simulation stack. How can V&V (verification and validation) standards be efficiently integrated with the simulation approach in industrial settings, and with a high level of automation? And then you have to look at the complete picture concerning simulation governance, credibility assessment, the issue of traceability, and how to define and objectively measure the fidelity of a model. This requires a kind of model analytics. Which combination of model fidelity is needed to enable a specific intended use of a connected set of models?

While the Modelica language is already a standard, industry still needs to push for increased interoperability between Modelica tools. This requires further standardisation of the Modelica language itself as well as standardisation of model encryption.

It should be realised that simulation is very much at the heart of corporate value, but companies are unwilling to take the risk of becoming locked in to a tool vendor. This is especially true of the heavy industries that manage product lifecycles in terms of decades.

Another open question concerns when virtual commissioning is sufficient and when hardware in the loop is required. ACOSAR worked on the integration of hardware in simulation environments, delivering the DCP and defining an accompanying integration process. Currently there is a strong demand for a reduction in test cases aimed at saving time and money, so it is important to think about cases where it makes most sense to front-load testing activities and perform mixed real-virtual simulations. Typical applications can be found in the field of automated driving. Confidence in self-driving vehicles can only be established by driving reasonable amounts of test kilometres, which can only be achieved through simulation. And here lies the possibility to integrate new sensors, actuators and controls. Where complexity is an issue, virtual commissioning tends to be used mostly for some areas of a production line (such as a robot cell), since the hardware of the whole production line is itself built up step by step. Virtual components are likewise replaced by real hardware in the same kind of gradual process.

Another direction is better integration of modelling and simulation into systems engineering processes in order to facilitate innovation (open the solution space) while rigorously ensuring compliance of the design with all requirements. This is the goal of the ITEA EMBrACE project that has just started and aims to develop a new standard for the modelling and simulation of assumptions and requirements. Indeed, safety-critical systems cannot be commissioned and operated if one cannot prove that they strictly comply with all requirements, in particular those expressed by the safety authorities. This is a striking example where the proof of the correct functioning of the system, using a justification method efficiently supported by a modelling and simulation framework, is as important as the system itself.

It is clear that these new R&D topics must be handled in an open and standardised spirit so there will be a need for compatibility among all relevant engineering standards. The EMMC (European Materials Modelling Council) is a very strong initiative driven by the EC and aimed at the digitisation of materials and manufacturing information, with work increasingly focusing on the very fine levels like micro, nano or even atomic.

Conclusions

This domain of modelling, simulation and digital twins has progressed a lot during the last few years. In particular, ITEA has been a key player in accelerating the evolution and impact at a global level through standardisation which is now being deployed in the markets. We are proud of these results, but the game is not over and already many new challenges are emerging that will make these first results even more impactful. Join the game and continue to transform the world with its digital twin. ITEA, the twin-headed programme that handles the concrete reality with digital models.

Other chapters

Use the arrows to view more chapters

Editorial

by Zeynep Sarılar

Country Focus: Austria

One-stop shop accelerates Research facilitator industrial R&D

SparxSystems Central Europe

Innovation sparks – with an x!

ITEA Success story: BENEFIT

Advancing evidence-based medicine for better patient outcome

ITEA at Smart City Expo 2019

Bringing together Smart City Challenges and Smart City Solutions

Community Talk with Herman Stegehuis

Harvesting the fruits of one’s labours

End user happiness: Panacea Gaming Platform

Setting a world standard in gaming for special populations

ITEA Success Story: ACCELERATE

A go-to-market acceleration platform for ICT

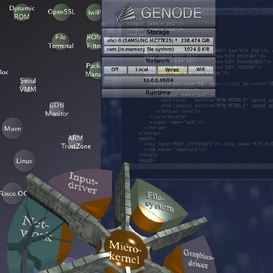

SME in the spotlight: Genode labs

Where fantasy creates a new reality

Cyber Security & Cloud Expo 2020

Continuing to focus on customer orientation

Modelling & Simulation

A vision of standards and state of play