Metaverse1

The missing link between the real and the virtual

Pokémon GO, a location-based augmented reality game, became a sensation in the summer of 2016 and, as reported by VentureBeat, had generated over USD 1.2 bn in revenue and 752 m downloads globally by July 2017. At CES 2018, Nvidia said it expected 50 million VR headsets to be sold by 2021.

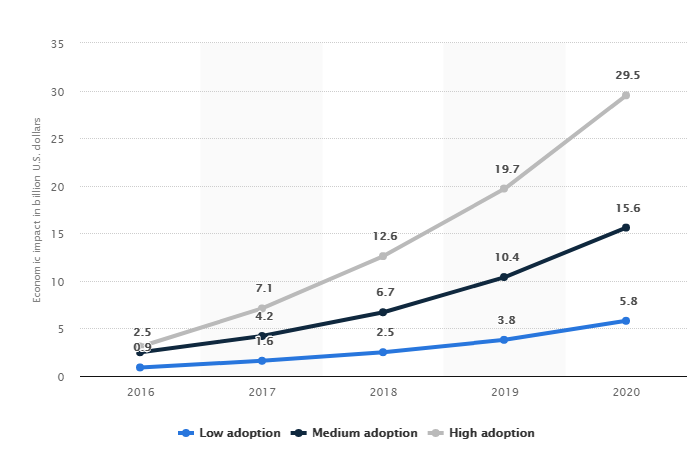

These are promising figures for a market with high growth potential. A Statista study projects the worldwide economic impact of virtual and augmented reality technologies from 2016 to 2020 (in USD bn): in the high-adoption scenario, the economic impact of VR/AR is forecast to reach USD 29.5 billion in 2020.

But let’s go back in time. Ten years ago, virtual worlds were already found in serious computer games and simulation models. However, they were mostly standalone and independent of each other, with little or no connection to the real world. The ITEA project Metaverse1, initiated by Jean Gelissen from Philips and Yesha Sivan from MetaverseLabs, ran successfully from October 2008 to March 2011 and set out to overcome this isolation by defining a standard to enable connectivity and interoperability between virtual worlds and with the real world. The objective was to define interoperability in such a way that information could be exchanged between worlds. Even more important was the development of a standard interface between the real, physical world and the virtual world of simulations and serious games. This made it possible to attach real-world sensors, such as body-parameter or environmental sensors, to provide input to simulations or, conversely, to feed output from such models back into the real world, for example to control lighting, temperature or ventilation, or to support personal wellbeing.

Many of the technologies required were not new, but it was necessary to identify what was missing and develop suitable solutions. Some 18 missing items were defined, and the necessary technologies developed. Among the missing items were:

- Transferring data and actions between systems by means of available sensor signals, to avoid clicking a mouse and keying in information;

- Feeding real-time 3D video streams into a virtual world;

- Providing support for multiple languages – crucial in social contexts;

- Supporting the inclusion of real audio input – for example taking original sounds such as fountains or the beach and integrating them into a virtual tourism application.

A signal for action

A key outcome was an international standard within the ISO/IEC Moving Picture Experts Group (MPEG). The first version of the ISO/IEC 23005-1:2010 (MPEG-V, Media context and control) standard was published in January 2011. MPEG-V defines boundary conditions, but the real added value lies in the applications that transform the signal into something useful. MPEG-V is applicable to various business models and domains in which audio-visual content can be associated with sensory effects that need to be rendered on appropriate actuators and/or that benefit from well-defined interaction with an associated virtual world. The 4th edition of the standard is currently under development, and Elsevier Inc. published a book on the standard in 2015 (https://www.sciencedirect.com/science/book/9780124201408#book-info).

In the Netherlands, the start-up uCrowds, which offers a model for simulating crowds in large infrastructures, events or computer games, has its roots in this project. The founder of the start-up was an associate of Utrecht University in the Metaverse1 project and co-developed a planning method that formed the basis for the crowd simulation framework. This software has already been used to investigate how long it takes to evacuate several stations of the North/South metro line in Amsterdam, and to analyse a wide range of scenarios that could occur during the Grand Départ of the 2015 Tour de France in Utrecht, as the city wanted to know whether the crowd would be safe if the event drew anywhere from 600,000 to 800,000 spectators.

"Metaverse1 set out to overcome isolation by defining a standard to enable connectivity and interoperability between virtual worlds and with the real world."

Enriching the cultural experience

In the case of Innovalia, the Metaverse1 project was a key step towards creating new business, products and services related to 3D modelling and virtual tourism. Within the Metaverse1 project, Innovalia Spain (in association with other partners from the consortium) developed a new island in Second Life, which represents the hot spots of the island of Gran Canaria. Second Life is a virtual world launched in 2003, with 57 million accounts created in 15 years and 350,000 new registrations on average per month, from about 200 countries around the world. One of the most important products developed on the Metaverse1 Virtual Island was the “Virtual Museum”, where users could access cultural information in real time. Building on this virtual product, in 2012 Innovalia developed a new platform for personalising cultural routes in museums according to the preferences of each individual user, which has been used by three museums in the Basque Country and Tenerife. After developing this concept further, in 2014 Innovalia launched a new mobile application for the cruise tourism sector, eGUIDE, providing an innovative solution for short breaks in a city. The app is already in use in the city of Santa Cruz de Tenerife, one of the top five cruise ports in Spain. Based on eGUIDE, Innovalia has developed another app for fairs and congresses, iSANCHO, which was used in more than 50 events in just one year.

After finishing the project, and with the aim of bringing the results to the market, Innovalia launched a new company, KUMO Technologies. It was a start-up seeking to forge breakthrough technologies to eliminate entry barriers to the high-quality 3D market, and it was born with the aim of creating 3D models of high fidelity and quality. Today, KUMO employs over 25 highly skilled workers, is a technology provider to large companies such as ORANGE, develops high-tech projects on five continents and cooperates with leading international research centres in 3D. In fact, KUMO is one of the success cases within the global FIWARE universe. One of KUMO's latest achievements is the development and commercialisation of its own Big Data visualisation tool and its innovative application to arts and culture in several creative projects worldwide.

Based on the project results, the INRIA Talaris team in France developed multilingual tools, which made the tourist’s experience in a virtual world even more interesting by adapting the immersive 3D environment to the language selected by the user. LORIA / INRIA Nancy - Grand Est contributed to and led the definition and specification of the Multilingual Information Framework (MLIF) standard [ISO DIS 24616], which was confirmed in December 2017.

More information

Related projects

Metaverse1

Organisations

INRIA (France), Innovalia (Spain)